In our pilot study, we draped a thin, flexible electrode array over the surface of the volunteer’s brain. The electrodes recorded neural signals and sent them to a speech decoder, which translated the signals into the words the man intended to say. It was the first time a paralyzed person who couldn’t speak had used neurotechnology to broadcast whole words, not just letters, from the brain.

That trial was the culmination of more than a decade of research on the underlying brain mechanisms that govern speech, and we’re enormously proud of what we’ve accomplished so far. But we’re just getting started.

My lab at UCSF is working with colleagues around the world to make this technology safe, stable, and reliable enough for everyday use at home. We’re also working to improve the system’s performance so it will be worth the effort.

How neuroprosthetics work

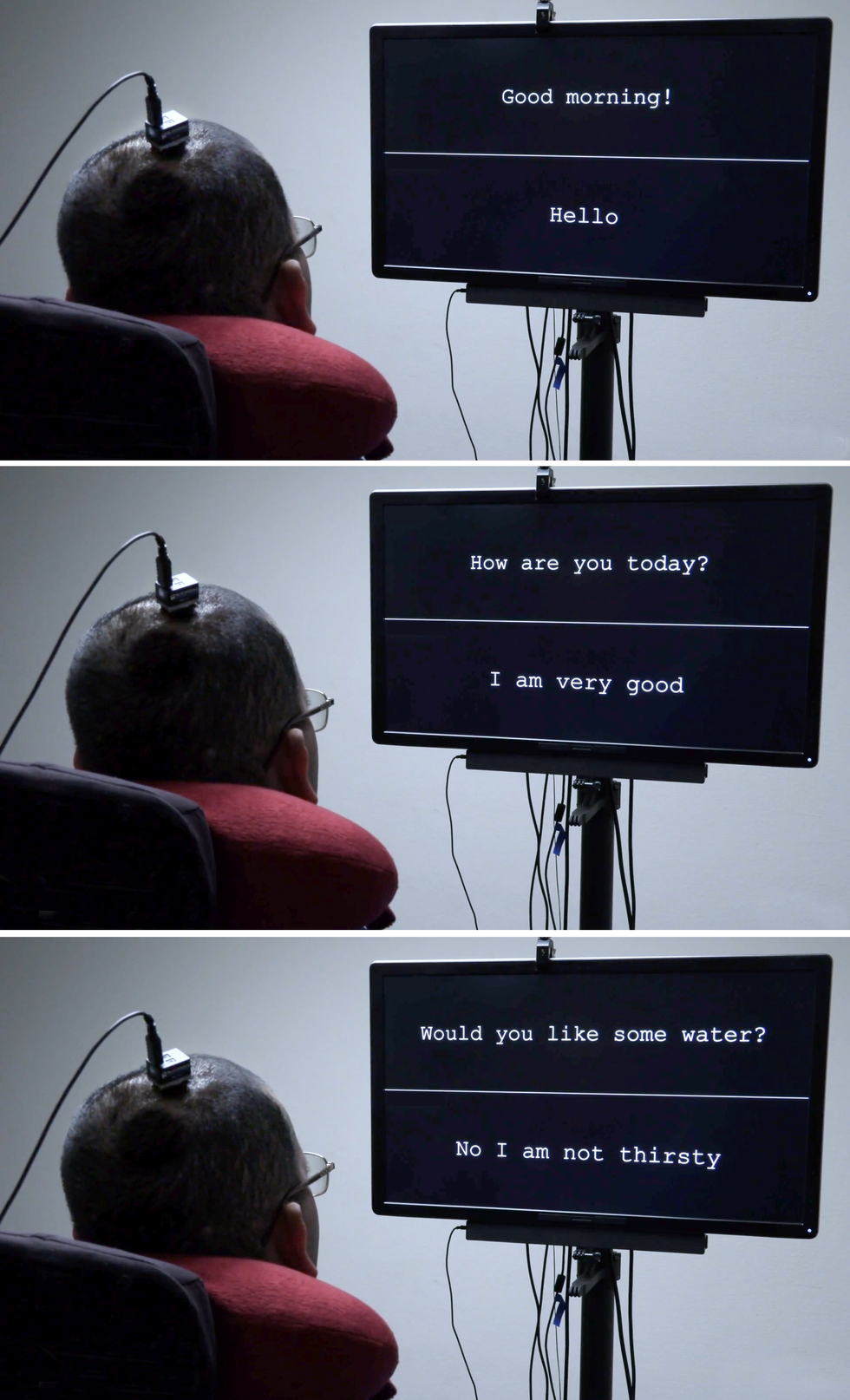

The first version of the brain-computer interface gave the volunteer a vocabulary of 50 practical words. University of California, San Francisco

Neuroprosthetics have come a long way in the past two decades. Prosthetic implants for hearing have advanced the furthest, with designs that interface with the cochlear nerve of the inner ear or directly with the auditory brain stem. There’s also considerable research on retinal and brain implants for vision, as well as efforts to give people with prosthetic hands a sense of touch. All of these sensory prosthetics take information from the outside world and convert it into electrical signals that feed into the brain’s processing centers.

The opposite kind of neuroprosthetic records the electrical activity of the brain and converts it into signals that control something in the outside world, such as a robotic arm, a video-game controller, or a cursor on a computer screen. That last control modality has been used by groups such as the BrainGate consortium to enable paralyzed people to type words, sometimes one letter at a time and sometimes using an autocomplete function to speed up the process.

For that typing-by-brain function, an implant is typically placed in the motor cortex, the part of the brain that controls movement. The user then imagines certain physical actions to control a cursor that moves over a virtual keyboard. Another approach, pioneered by some of my collaborators in a 2021 paper, had one user imagine that he was holding a pen to paper and writing letters, creating signals in the motor cortex that were translated into text. That approach set a new record for speed, enabling the volunteer to write about 18 words per minute.
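The cursor-control step can be sketched in a few lines. This is a minimal illustration only, assuming a decoder that already outputs a two-dimensional cursor velocity in pixels per second; the grid layout, the 100-pixel key size, and the names `Cursor` and `KEY_ROWS` are hypothetical, and the step that actually selects a key (such as a click or a dwell timer) is left out.

```python
from dataclasses import dataclass

# Hypothetical virtual keyboard: a 6-by-5 grid of square keys, 100 px each.
KEY_ROWS = ["ABCDEF", "GHIJKL", "MNOPQR", "STUVWX", "YZ .,?"]
KEY_SIZE = 100.0
WIDTH = KEY_SIZE * len(KEY_ROWS[0])
HEIGHT = KEY_SIZE * len(KEY_ROWS)


def _clamp(value: float, upper: float) -> float:
    """Keep a coordinate on screen (just inside the far edge)."""
    return min(max(value, 0.0), upper - 1e-9)


@dataclass
class Cursor:
    x: float = 0.0
    y: float = 0.0

    def step(self, vx: float, vy: float, dt: float) -> None:
        """Integrate one decoded velocity sample (px/s) over dt seconds."""
        self.x = _clamp(self.x + vx * dt, WIDTH)
        self.y = _clamp(self.y + vy * dt, HEIGHT)

    def key_under_cursor(self) -> str:
        """Return the key whose square currently contains the cursor."""
        return KEY_ROWS[int(self.y // KEY_SIZE)][int(self.x // KEY_SIZE)]


if __name__ == "__main__":
    cursor = Cursor()
    # One second of a constant decoded velocity, in 10 steps of 0.1 s.
    for _ in range(10):
        cursor.step(vx=250.0, vy=120.0, dt=0.1)
    print(cursor.key_under_cursor())  # I
```

Integrating velocity rather than decoding an absolute position keeps the sketch simple; the clamp prevents a noisy velocity estimate from pushing the cursor off the keyboard.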

In my lab’s research, we’ve taken a more ambitious approach. Instead of decoding a user’s intent to move a cursor or a pen, we decode the intent to control the vocal tract, which comprises dozens of muscles governing the larynx (commonly called the voice box), the tongue, and the lips.

The seemingly simple conversational setup for the paralyzed man [in pink shirt] is enabled by both sophisticated neurotech hardware and machine-learning systems that decode his brain signals. University of California, San Francisco

I began working in this area more than 10 years ago. As a neurosurgeon, I would often see patients with severe injuries that left them unable to speak. To my surprise, in many cases the locations of brain injuries didn’t match up with the syndromes I had learned about in medical school, and I realized that we still have a lot to learn about how language is processed in the brain. I decided to study the underlying neurobiology of language and, if possible, to develop a brain-machine interface (BMI) to restore communication for people who have lost it. In addition to my neurosurgical background, my team has expertise in linguistics, electrical engineering, computer science, bioengineering, and medicine. Our ongoing clinical trial is testing both hardware and software to explore the limits of our BMI and determine what kind of speech we can restore to people.

The muscles involved in speech

Speech is one of the behaviors that sets humans apart. Plenty of other species vocalize, but only humans combine a set of sounds in myriad different ways to represent the world around them. It’s also an extraordinarily complicated motor act; some experts believe it’s the most complex motor action that people perform. Speaking is a product of modulated airflow through the vocal tract: With every utterance, we shape the breath by creating audible vibrations in our laryngeal vocal folds and changing the shape of the lips, jaw, and tongue.

Many of the muscles of the vocal tract are quite unlike joint-based muscles such as those in the arms and legs, which can move in only a few prescribed ways. For example, the muscle that controls the lips is a sphincter, while the muscles that make up the tongue are governed more by hydraulics: The tongue is largely composed of a fixed volume of muscular tissue, so moving one part of the tongue changes its shape elsewhere. The physics governing the movements of such muscles is totally different from that of the biceps or hamstrings.
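The “fixed volume” point can be made concrete with an idealized model that is an assumption of this sketch, not something the article specifies: treat a segment of the tongue as a cylinder of constant volume V = π·r²·L. If the segment shortens from length L₀ to L₁, its radius must grow to r₁ = r₀·√(L₀/L₁), so halving the length widens it by a factor of about 1.41. Real tongue tissue is not a uniform cylinder; the function names are illustrative.

```python
import math


def cylinder_volume(radius: float, length: float) -> float:
    """Volume of an idealized cylindrical tissue segment."""
    return math.pi * radius**2 * length


def radius_after_length_change(r0: float, length0: float, length1: float) -> float:
    """Radius that keeps the volume constant when length changes.

    Idealized assumption: pi * r0**2 * length0 == pi * r1**2 * length1,
    so r1 = r0 * sqrt(length0 / length1).
    """
    if min(r0, length0, length1) <= 0:
        raise ValueError("all dimensions must be positive")
    return r0 * math.sqrt(length0 / length1)


if __name__ == "__main__":
    r1 = radius_after_length_change(r0=1.0, length0=2.0, length1=1.0)
    print(f"radius grows by a factor of {r1:.3f}")  # 1.414
    # The volume is unchanged, up to floating-point rounding.
    print(math.isclose(cylinder_volume(1.0, 2.0), cylinder_volume(r1, 1.0)))  # True
```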

Because there are so many muscles involved and each has so many degrees of freedom, there is essentially an infinite number of possible configurations. But when people speak, it turns out they use a relatively small set of core movements (which differ somewhat across languages). For example, when English speakers make the “d” sound, they put their tongues behind their teeth; when they make the “k” sound, the backs of their tongues go up to touch the ceiling of the back of the mouth. Few people are conscious of the precise, complex, and coordinated muscle actions required to say the simplest word.
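One way to picture that small set of core movements is as a lookup table from speech sounds to articulator gestures. The table below is a hypothetical, heavily simplified sketch: the “d” and “k” entries paraphrase the description above, the “aa” entry follows the article’s later “aaah” example (tongue and jaw drop), and the “b” entry (both lips close) is standard phonetics. Real articulation is continuous and overlapping rather than a set of discrete labels.

```python
# Hypothetical, simplified table: phoneme -> {articulator: target position}.
GESTURES = {
    "d": {"tongue tip": "behind the upper teeth"},
    "k": {"tongue back": "raised to the roof of the back of the mouth"},
    "aa": {"tongue": "lowered", "jaw": "lowered"},
    "b": {"lips": "closed"},
}


def articulators_used(phonemes: list[str]) -> list[str]:
    """Return the sorted articulators that a phoneme sequence engages."""
    used: set[str] = set()
    for phoneme in phonemes:
        if phoneme not in GESTURES:
            raise KeyError(f"no gesture listed for {phoneme!r}")
        used.update(GESTURES[phoneme])  # adds the articulator names (dict keys)
    return sorted(used)


if __name__ == "__main__":
    print(articulators_used(["d", "aa", "k"]))
    # ['jaw', 'tongue', 'tongue back', 'tongue tip']
```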

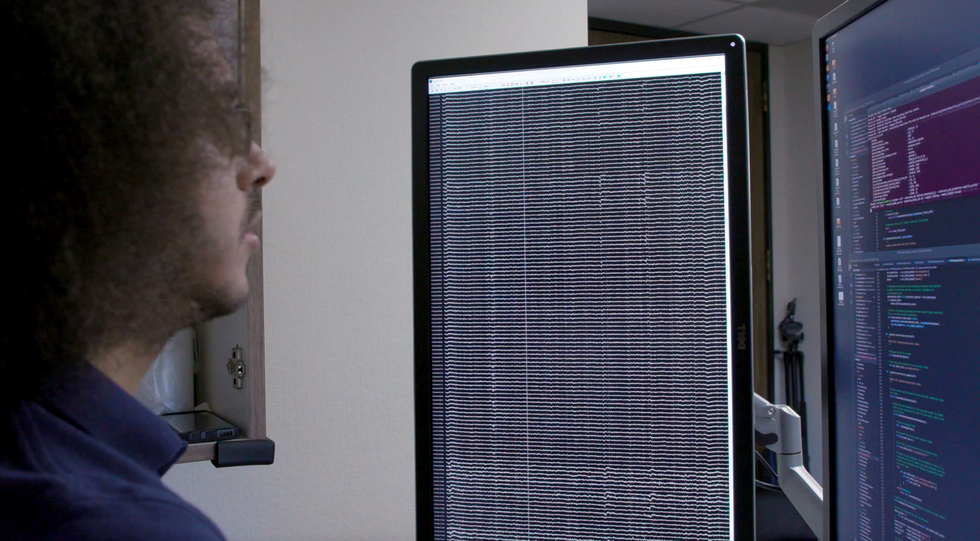

Team member David Moses looks at a readout of the patient’s brain waves [left screen] and a display of the decoding system’s activity [right screen]. University of California, San Francisco

My research group focuses on the parts of the brain’s motor cortex that send movement commands to the muscles of the face, throat, mouth, and tongue. Those brain regions are multitaskers: They manage the muscle movements that produce speech and also the movements of those same muscles for swallowing, smiling, and kissing.

Studying the neural activity of those regions in a useful way requires both spatial resolution on the scale of millimeters and temporal resolution on the scale of milliseconds. Historically, noninvasive imaging systems have been able to provide one or the other, but not both. When we started this research, we found remarkably little data on how brain activity patterns were associated with even the simplest components of speech: phonemes and syllables.

Here we owe a debt of gratitude to our volunteers. At the UCSF epilepsy center, patients preparing for surgery typically have electrodes surgically placed over the surfaces of their brains for several days so we can map the regions involved when they have seizures. During those few days of wired-up downtime, many patients volunteer for neurological research experiments that make use of the electrode recordings from their brains. My group asked patients to let us study their patterns of neural activity while they spoke words.

The hardware involved is called electrocorticography (ECoG). The electrodes in an ECoG system don’t penetrate the brain but lie on its surface. Our arrays can contain several hundred electrode sensors, each of which records from hundreds of neurons. So far, we’ve used an array with 256 channels. Our goal in those early studies was to discover the patterns of cortical activity when people speak simple syllables. We asked volunteers to say specific sounds and words while we recorded their neural patterns and tracked the movements of their tongues and mouths. Sometimes we did so by having them wear colored face paint and using a computer-vision system to extract the kinematic gestures; other times we used an ultrasound machine positioned under the patients’ jaws to image their moving tongues.
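Pairing neural recordings with tracked movements means putting both on a common time base. The sketch below assumes, hypothetically, that neural features arrive as one 256-value sample per time step and that the movement tracker runs at a lower frame rate, so neural samples are averaged over fixed windows (the hypothetical `samples_per_bin`) before being paired with kinematic frames. It illustrates the bookkeeping only and is not the lab’s preprocessing pipeline; the `jaw_opening` field is invented.

```python
from statistics import fmean

N_CHANNELS = 256  # the array size mentioned above


def bin_channels(samples: list[list[float]], samples_per_bin: int) -> list[list[float]]:
    """Average each channel over consecutive, non-overlapping windows.

    samples: one list of N_CHANNELS values per time step.
    Trailing samples that do not fill a whole window are dropped.
    """
    if samples_per_bin <= 0:
        raise ValueError("samples_per_bin must be positive")
    if any(len(row) != N_CHANNELS for row in samples):
        raise ValueError(f"every sample needs {N_CHANNELS} channel values")
    n_bins = len(samples) // samples_per_bin
    binned = []
    for b in range(n_bins):
        window = samples[b * samples_per_bin:(b + 1) * samples_per_bin]
        # zip(*window) regroups the window's values by channel.
        binned.append([fmean(channel) for channel in zip(*window)])
    return binned


def pair_with_kinematics(neural_bins, kinematic_frames):
    """Pair each neural bin with the movement frame covering the same interval."""
    n = min(len(neural_bins), len(kinematic_frames))
    return list(zip(neural_bins[:n], kinematic_frames[:n]))


if __name__ == "__main__":
    # Synthetic data: channel c at time step t has value t + c.
    samples = [[float(t + c) for c in range(N_CHANNELS)] for t in range(5)]
    bins = bin_channels(samples, samples_per_bin=2)
    print(len(bins), bins[0][0], bins[1][255])  # 2 0.5 257.5
    # Hypothetical kinematic frames from a movement tracker.
    frames = [{"jaw_opening": 0.1}, {"jaw_opening": 0.3}, {"jaw_opening": 0.2}]
    print(len(pair_with_kinematics(bins, frames)))  # 2
```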

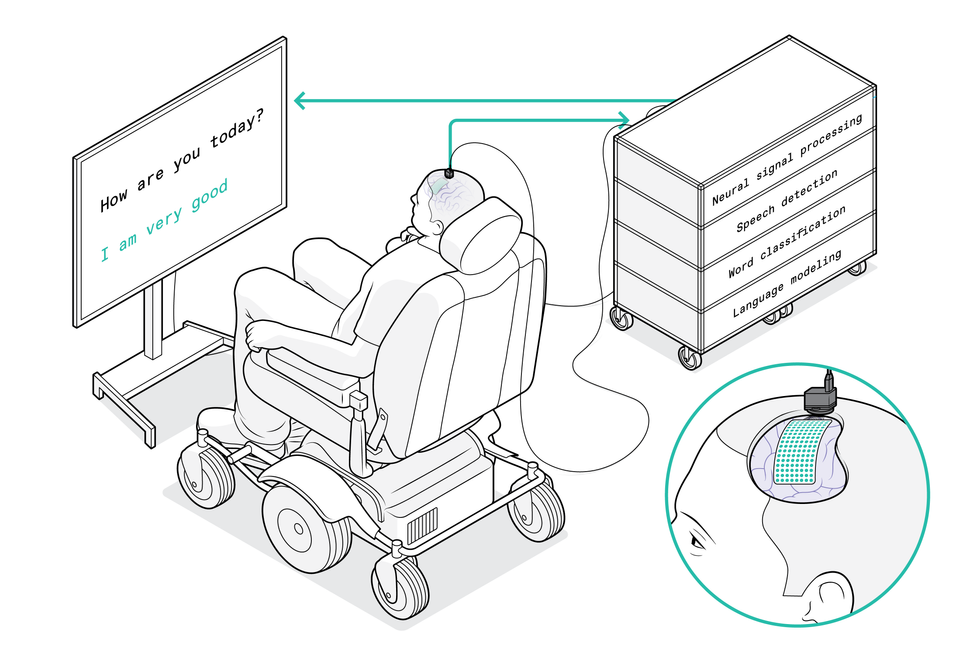

The system starts with a flexible electrode array that is draped over the patient’s brain to pick up signals from the motor cortex. The array specifically captures movement commands intended for the patient’s vocal tract. A port affixed to the skull guides the wires that go to the computer system, which decodes the brain signals and translates them into the words that the patient wants to say. His answers then appear on the display screen. Chris Philpot

We used these systems to match neural patterns to movements of the vocal tract. At first we had a lot of questions about the neural code. One possibility was that neural activity encoded directions for particular muscles, and the brain essentially turned these muscles on and off as if pressing keys on a keyboard. Another idea was that the code determined the velocity of the muscle contractions. Yet another was that neural activity corresponded with coordinated patterns of muscle contractions used to produce a certain sound. (For example, to make the “aaah” sound, both the tongue and the jaw need to drop.) What we discovered was that there is a map of representations that controls different parts of the vocal tract, and that together the different brain areas combine in a coordinated manner to give rise to fluent speech.

The role of AI in today’s neurotech

Our work depends on the advances in artificial intelligence over the past decade. We can feed the data we collected about both neural activity and the kinematics of speech into a neural network, then let the machine-learning algorithm find patterns in the associations between the two data sets. It was possible to make connections between neural activity and produced speech, and to use this model to produce computer-generated speech or text. But this technique couldn’t train an algorithm for paralyzed people because we’d lack half of the data: We’d have the neural patterns, but nothing about the corresponding muscle movements.
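Letting an algorithm find patterns in the associations between neural activity and kinematics can be illustrated with the simplest possible stand-in: a linear regression fitted by gradient descent, with one such model per kinematic variable. This is not the neural network described in the text; the synthetic data, the three feature channels, and `fit_linear_decoder` are hypothetical, and real recordings are far noisier and higher-dimensional.

```python
import random


def fit_linear_decoder(X, y, lr=0.1, epochs=500):
    """Fit y ~ w . x + b by full-batch gradient descent on mean squared error."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = sum(wj * xj for wj, xj in zip(w, xi)) + b - yi
            for j in range(d):
                grad_w[j] += 2.0 * err * xi[j] / n
            grad_b += 2.0 * err / n
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


if __name__ == "__main__":
    rng = random.Random(0)
    # Synthetic "neural features" (3 channels) and a hypothetical kinematic
    # target, such as a tongue-height trace, generated from known weights.
    true_w, true_b = [0.5, -1.0, 2.0], 0.3
    X = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(100)]
    y = [sum(wt * x for wt, x in zip(true_w, xi)) + true_b for xi in X]
    w, b = fit_linear_decoder(X, y)
    # The fitted values should be close to true_w and true_b.
    print([round(v, 3) for v in w], round(b, 3))
```

The sketch also shows the limitation the paragraph describes: fitting requires the target values `y`, which is exactly the movement data that cannot be recorded from a paralyzed user.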

The smarter way to use machine learning, we realized, was to break the problem into two steps. First, the decoder translates signals from the brain into intended movements of muscles in the vocal tract; then it translates those intended movements into synthesized speech or text.

We call this a biomimetic approach because it copies biology: In the human body, neural activity is directly responsible for the vocal tract’s movements and only indirectly responsible for the sounds produced. A big advantage of this approach comes in training the decoder for the second step, translating muscle movements into sounds. Because the relationships between vocal tract movements and sound are fairly universal, we were able to train that part of the decoder on large data sets derived from people who weren’t paralyzed.
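The two-step structure can be sketched as two separately trained functions. In this minimal illustration, the stage-1 weights, the two summary articulator values, the example words, and all function names are hypothetical: stage 1 is a fixed linear map from neural features to articulator values, and stage 2 is a nearest-template lookup whose templates are averaged from movement data alone. That separation is why stage 2 can be fitted on recordings from people who are not paralyzed. The actual decoders are learned models far richer than this.

```python
import math

# Stage 1 (hypothetical weights): 3 neural features -> 2 articulator values,
# for example a lip-aperture summary and a tongue-height summary.
STAGE1_WEIGHTS = [
    [0.8, 0.1, 0.0],
    [0.0, 0.2, 0.9],
]


def neural_to_articulation(features):
    """Stage 1: map neural features to intended articulator values."""
    return [sum(w * f for w, f in zip(row, features)) for row in STAGE1_WEIGHTS]


def fit_stage2(examples):
    """Stage 2 training: average articulator values per word.

    `examples` holds (word, articulator_values) pairs and needs no neural
    data, so it could come from speakers who are not paralyzed.
    """
    sums, counts = {}, {}
    for word, values in examples:
        acc = sums.setdefault(word, [0.0] * len(values))
        for i, v in enumerate(values):
            acc[i] += v
        counts[word] = counts.get(word, 0) + 1
    return {word: [s / counts[word] for s in acc] for word, acc in sums.items()}


def articulation_to_word(values, templates):
    """Stage 2: pick the word whose template is nearest (Euclidean distance)."""
    return min(templates, key=lambda word: math.dist(values, templates[word]))


def decode(features, templates):
    """Full pipeline: neural features -> articulation -> word."""
    return articulation_to_word(neural_to_articulation(features), templates)


if __name__ == "__main__":
    templates = fit_stage2([
        ("help", [0.9, 0.1]), ("help", [0.7, 0.3]),
        ("thirsty", [0.1, 0.9]), ("thirsty", [0.3, 0.7]),
    ])
    print(decode([1.0, 0.0, 0.0], templates))  # help
    print(decode([0.0, 0.0, 1.0], templates))  # thirsty
```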

A clinical trial to test our speech neuroprosthetic

The next big challenge was to bring the technology to the people who could really benefit from it.

The National Institutes of Health (NIH) is funding our pilot trial, which began in 2021. We already have two paralyzed volunteers with implanted ECoG arrays, and we hope to enroll more in the coming years. The primary goal is to improve their communication, and we’re measuring performance in terms of words per minute. An average adult typing on a full keyboard can type 40 words per minute, with the fastest typists reaching speeds of more than 80 words per minute.

Edward Chang was inspired to develop a brain-to-speech system by the patients he encountered in his neurosurgery practice. Barbara Ries

We think that tapping into the speech system can provide even better results. Human speech is much faster than typing: An English speaker can easily say 150 words in a minute. We’d like to enable paralyzed people to communicate at a rate of 100 words per minute. We have a lot of work to do to reach that goal, but we think our approach makes it a feasible target.
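The rates quoted in this article are easier to compare as time per message. The arithmetic below uses a hypothetical 30-word message; the words-per-minute figures are the ones given in the text, with 80 words per minute used as the lower bound for the fastest typists.

```python
# Rates (words per minute) as quoted in the article.
RATES_WPM = {
    "imagined handwriting (2021 record)": 18,
    "average typist": 40,
    "fastest typists (80+ wpm)": 80,
    "speech neuroprosthesis target": 100,
    "natural English speech": 150,
}


def seconds_for(n_words: int, wpm: float) -> float:
    """Time in seconds to produce n_words at a rate of wpm words per minute."""
    return n_words * 60 / wpm


if __name__ == "__main__":
    # Hypothetical 30-word message.
    for label, wpm in RATES_WPM.items():
        print(f"{label:>36}: {seconds_for(30, wpm):6.1f} s")
```

At these rates the 30-word message takes 100, 45, 22.5, 18, and 12 seconds, respectively, so the 100-words-per-minute target would cut the handwriting-based time by more than a factor of five.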

The implant procedure is routine. First the surgeon removes a small portion of the skull; next, the flexible ECoG array is gently placed across the surface of the cortex. Then a small port is fixed to the skull bone and exits through a separate opening in the scalp. We currently need that port, which attaches to external wires to transmit data from the electrodes, but we hope to make the system wireless in the future.

We’ve considered using penetrating microelectrodes, because they can record from smaller neural populations and may therefore provide more detail about neural activity. But the current hardware isn’t as robust and safe as ECoG for clinical applications, especially over many years.

Another consideration is that penetrating electrodes typically require daily recalibration to turn the neural signals into clear commands, and research on neural devices has shown that speed of setup and performance reliability are key to getting people to use the technology. That’s why we’ve prioritized stability in creating a “plug and play” system for long-term use. We conducted a study looking at the variability of a volunteer’s neural signals over time and found that the decoder performed better if it used data patterns across multiple sessions and multiple days. In machine-learning terms, we say that the decoder’s “weights” carried over, creating consolidated neural signals.
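The article does not say how the decoder pools data across sessions. One simple stand-in for weights that carry over is a per-word template updated with a running mean as new sessions arrive, instead of being rebuilt from scratch each day. The class below is a hypothetical sketch of that idea; its name, the feature vectors, and the running-mean rule are assumptions, not the lab’s method.

```python
class ConsolidatedTemplates:
    """Hypothetical stand-in: per-word mean feature vectors, updated across sessions."""

    def __init__(self) -> None:
        self._means: dict[str, list[float]] = {}
        self._counts: dict[str, int] = {}

    def update(self, word: str, features: list[float]) -> None:
        """Fold one new example into the running mean for `word`."""
        n = self._counts.get(word, 0) + 1
        mean = self._means.get(word, [0.0] * len(features))
        if len(mean) != len(features):
            raise ValueError("feature length changed between sessions")
        # Incremental mean: m_n = m_(n-1) + (x - m_(n-1)) / n
        self._means[word] = [m + (x - m) / n for m, x in zip(mean, features)]
        self._counts[word] = n

    def template(self, word: str) -> list[float]:
        return list(self._means[word])

    def count(self, word: str) -> int:
        return self._counts.get(word, 0)


if __name__ == "__main__":
    decoder = ConsolidatedTemplates()
    sessions = [
        [("help", [1.0, 2.0]), ("help", [3.0, 4.0])],  # day 1
        [("help", [5.0, 6.0])],                         # day 2
    ]
    for session in sessions:
        for word, features in session:
            decoder.update(word, features)
    print(decoder.count("help"), decoder.template("help"))  # 3 [3.0, 4.0]
```

After day 2 the template equals the mean over all three examples, so nothing learned on day 1 is discarded; recalibrating from scratch each session would have kept only the day-2 example.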

https://www.youtube.com/watch?v=AfX-fH3A6Bs University of California, San Francisco

Because our paralyzed volunteers can’t speak while we watch their brain patterns, we asked our first volunteer to try two different approaches. He started with a list of 50 words that are handy for daily life, such as “hungry,” “thirsty,” “please,” “help,” and “computer.” During 48 sessions over several months, we sometimes asked him to just imagine saying each of the words on the list, and sometimes asked him to overtly try to say them. We found that attempts to speak generated clearer brain signals and were sufficient to train the decoding algorithm. Then the volunteer could use those words from the list to generate sentences of his own choosing, such as “No I am not thirsty.”
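The sketch below shows one way a fixed word list can constrain decoding, assuming a hypothetical classifier that produces one score per candidate word for each speech attempt. It discards out-of-vocabulary candidates, converts the remaining scores to probabilities with a softmax, and keeps the most probable word. Only a few of the 50 words are listed, all taken from the examples above, and the scores are invented.

```python
import math

# Partial, illustrative vocabulary: words named in the text (the real list had 50).
VOCABULARY = {"hungry", "thirsty", "please", "help", "computer", "no", "i", "am", "not"}


def softmax(scores: dict[str, float]) -> dict[str, float]:
    """Convert raw scores to probabilities (shifted by the max for stability)."""
    top = max(scores.values())
    exps = {word: math.exp(s - top) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}


def decode_attempt(scores: dict[str, float]) -> tuple[str, float]:
    """Return the most probable in-vocabulary word and its probability."""
    in_vocab = {w: s for w, s in scores.items() if w in VOCABULARY}
    if not in_vocab:
        raise ValueError("no in-vocabulary candidates")
    probs = softmax(in_vocab)
    best = max(probs, key=probs.get)
    return best, probs[best]


def decode_sentence(attempts: list[dict[str, float]]) -> str:
    """Decode one word per attempt and join them into a sentence."""
    return " ".join(decode_attempt(scores)[0] for scores in attempts)


if __name__ == "__main__":
    # Invented per-attempt scores from a hypothetical classifier.
    attempts = [
        {"no": 3.0, "help": 1.0},
        {"i": 2.0, "am": 0.5},
        {"am": 2.5, "i": 0.1},
        {"not": 1.7, "please": 1.2},
        {"thirsty": 4.0, "hungry": 3.9, "tired": 9.0},  # "tired" is not in the list
    ]
    print(decode_sentence(attempts))  # no i am not thirsty
```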

We’re now pushing to expand to a broader vocabulary. To make that work, we need to continue to improve the current algorithms and interfaces, but I am confident those improvements will happen in the coming months and years. Now that the proof of principle has been established, the goal is optimization. We can focus on making our system faster, more accurate, and, most important, safer and more reliable. Things should move quickly now.

Probably the biggest breakthroughs will come if we can get a better understanding of the brain systems we’re trying to decode, and how paralysis alters their activity. We’ve come to realize that the neural patterns of a paralyzed person who can’t send commands to the muscles of their vocal tract are very different from those of an epilepsy patient who can. We’re attempting an ambitious feat of BMI engineering while there is still lots to learn about the underlying neuroscience. We believe it will all come together to give our patients their voices back.
